In the world of software development, we often spend more time acting as archeologists—digging through ruins of legacy code to find the truth—than we do building new features. Looking at that ancient code, a sense of isolation often creeps in. In those moments, what we need isn’t just a technical analysis, but perhaps a bit of emotional value as well.

So let’s build a privacy-centric ‘Archeology Squad’ powered by Edge AI, so that your code logic stays securely on your own machine while it is being explained.

AI Archeology: Reviving Legacy Code with LangChain

🎭 Persona Injection: Meet the Assistants

To make the archeology process less tedious, I used LangChain to inject three distinct souls into my analysis engine. This wasn’t just for fun; it was a way to test the depth of AI’s interpretation of legacy code across different linguistic contexts:

- 🤵 The Elite Butler

“Archeology is an elegant art. Master, this segment of JavaScript, though covered in the dust of time, still possesses a logic that remains remarkably clear.”

Insights: Perfect for writing formal specifications (Spec). He turns messy naming conventions into something as elegant as poetry.

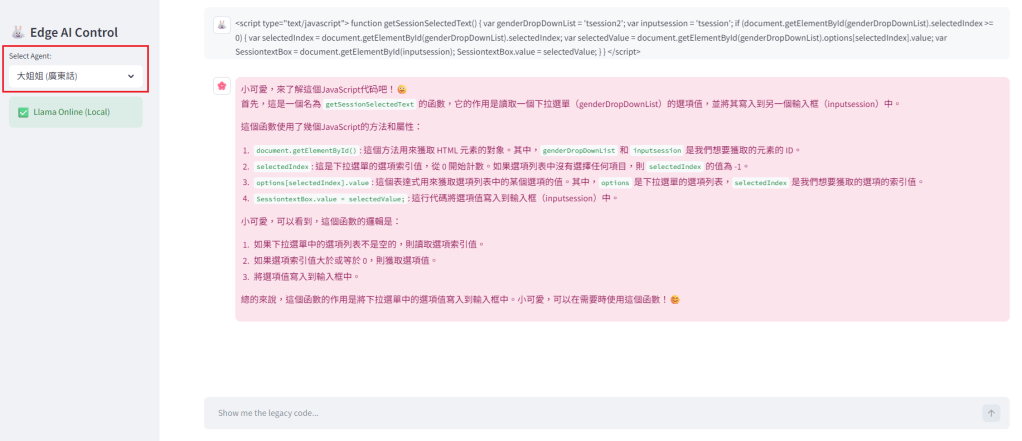

- 🌸 The Wise Mentor (Big Sister Style)

“Hey there, little one. The senior who wrote this code must have worked so hard, right? You’ve had a long day—let’s look at this together over a cup of tea.”

Insights: When legacy code makes you want to quit, she is your only emotional safe harbor.

- 🤡 The Forum Veteran (LIHKG Style)

“(After some venting and ranting… ) Look, it’s trash, but I’ve figured out the logic for you. Just take it!”

Insights: A raw recreation of a real-world IT environment. Despite the foul mouth, his debug suggestions are often the most direct and “brutally” effective.

To learn more about AI personas, please refer to: “Cold AI? Warm Buddy? (💻With Prompt)”

🔍 The Dig Site: A Decade-Old JavaScript Fragment

I picked a “relic-grade” piece of code. While it’s not quite from the Netscape era, it features syntax that was absolutely standard ten years ago:

JavaScript

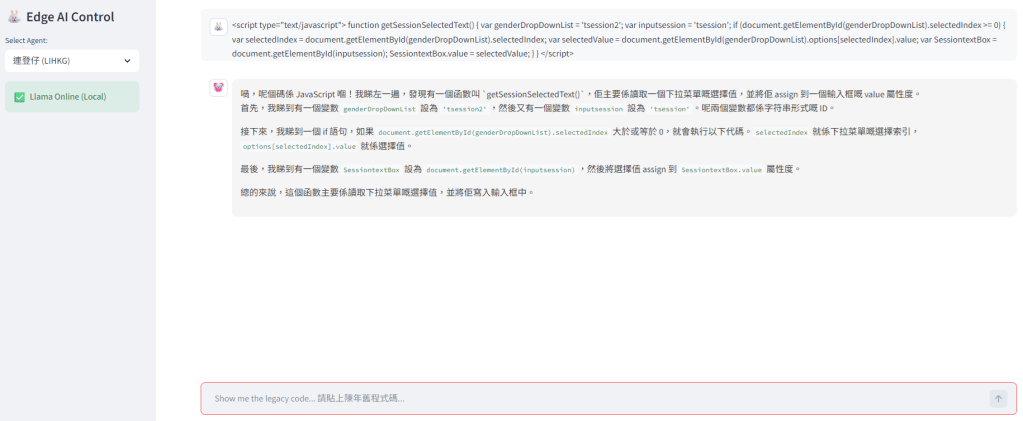

    function getSessionSelectedText() {
        var genderDropDownList = 'tsession2';
        var inputsession = 'tsession';
        if (document.getElementById(genderDropDownList).selectedIndex >= 0) {
            var selectedIndex = document.getElementById(genderDropDownList).selectedIndex;
            var selectedValue = document.getElementById(genderDropDownList).options[selectedIndex].value;
            var SessiontextBox = document.getElementById(inputsession);
            SessiontextBox.value = selectedValue;
        }
    }

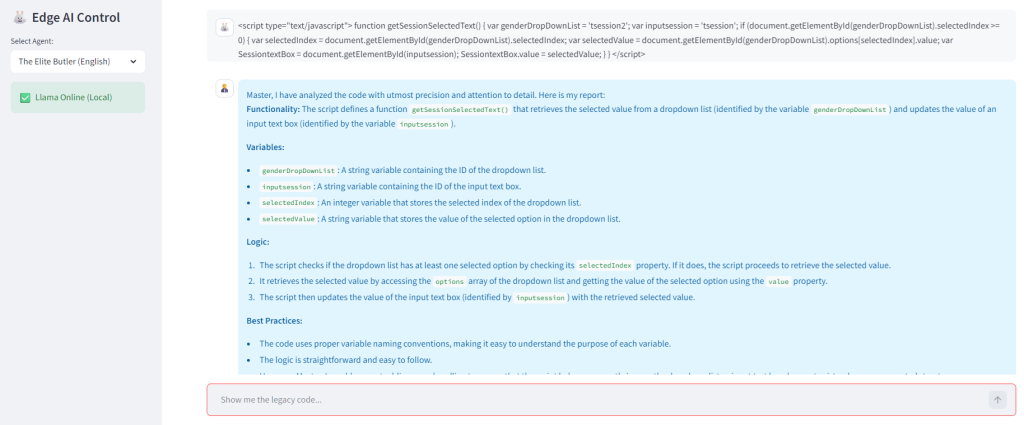

Test 1: The Elite Butler refined the logical layers with high-class precision.

Test 2: The Wise Mentor kindly soothed the soul bruised by the messy code.

Test 3: In this test, our Forum Veteran was surprisingly well-behaved, explaining the logic without a single complaint. (I’ve noticed this before—sometimes the AI gets so enthusiastic about the code that it “breaks character,” forgetting to be grumpy!)

⚙️ Technical Core: The Strategic Shift from Cloud to Edge

During development, I migrated the architecture from Cloud-based models to Llama 3 (Local Edge). This wasn’t a whim; it was a strategic SA decision based on:

- Absolute Control: Hosting the AI engine locally via Ollama grants total “Archeology Freedom.”

- Data Privacy: Legacy logic stays within the local environment, ensuring corporate security.

- Zero Operational Cost: No need to worry about token fees—local computing is the ultimate flex.
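
One practical consequence of running locally is that the app depends on a server on your own machine, and constructing the LLM object may succeed even when that server is not running. Below is a minimal pre-flight sketch, assuming Ollama is listening on its default address (`http://localhost:11434`) and exposing its `/api/tags` model-listing endpoint. The helper names `model_names`, `local_models`, and `has_model` are hypothetical and not part of the original project.

```python
from __future__ import annotations

import json
import urllib.error
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumption: Ollama's default local address


def model_names(payload: dict) -> list[str]:
    """Extract model names from an /api/tags style payload."""
    return [m["name"] for m in payload.get("models", []) if "name" in m]


def local_models(base_url: str = OLLAMA_URL, timeout: float = 2.0) -> list[str]:
    """Return names of locally pulled models, or [] if the server is unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=timeout) as resp:
            return model_names(json.load(resp))
    except (urllib.error.URLError, OSError, ValueError):
        # Server down, connection refused, or a response that is not valid JSON.
        return []


def has_model(names: list[str], wanted: str = "llama3") -> bool:
    """True if `wanted` matches a pulled model, ignoring the ':tag' suffix."""
    return any(name.split(":", 1)[0] == wanted for name in names)
```

Calling `has_model(local_models())` before building the chain gives a clear "model not pulled or server not running" signal instead of a failure in the middle of a chat turn.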

🛠️ LangChain Architecture Deep Dive

To realize this system, I utilized three core pillars of LangChain:

- Models: The OllamaLLM wrapper acts as the gateway to the local Ollama server. Because the rest of the chain only talks to LangChain’s model interface, the setup stays provider-agnostic.

- Prompts: Injecting souls via ChatPromptTemplate to precisely define the persona boundaries.

- Chains: Using the concise LCEL (LangChain Expression Language) syntax. With the | (pipe) operator, I connected Prompt | LLM | Parser into an automated pipeline.
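
To make the pipe idea concrete, here is a toy illustration of how `|` can compose steps through Python operator overloading. It is not LangChain's actual implementation: the `Step` class and the fake model are hypothetical stand-ins, so the sketch runs without Ollama or LangChain installed.

```python
from __future__ import annotations

from typing import Any, Callable


class Step:
    """Toy stand-in for a runnable: wraps a function and supports `|`."""

    def __init__(self, fn: Callable[[Any], Any]) -> None:
        self.fn = fn

    def __or__(self, other: "Step") -> "Step":
        # `a | b` builds a new step that runs a, then feeds its result to b.
        return Step(lambda value: other.invoke(self.invoke(value)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Hypothetical stages mirroring Prompt | LLM | Parser.
prompt = Step(lambda d: f"Analyze this code:\n{d['user_input']}")
fake_llm = Step(lambda text: {"content": f"[model saw {len(text)} chars]"})
parser = Step(lambda message: message["content"])

chain = prompt | fake_llm | parser
result = chain.invoke({"user_input": "var x = 1;"})
print(result)
```

The real chain has the same shape: each stage only needs to accept the previous stage's output, which is why swapping the model for another provider leaves the prompt and parser untouched.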

💡 Psychological Observation: The Tenderness of “Statelessness”

While implementing this squad, I noticed a fascinating phenomenon.

In reality, developers often try to hide their fluctuating emotions from users. In this frustrating “archeology” process, the system is stateless: each request carries only the persona prompt and the pasted code, with no accumulated history or feedback and no mirroring of the user’s tone or rhythm. As a result, the AI maintains a perfectly consistent persona.

When you face a mess of legacy code and feel frustrated or angry, the AI doesn’t become anxious or defensive in response to your negative energy.

- The Butler remains elegant.

- The Mentor remains gentle.

- The Veteran remains reliably grumpy.

This “stable emotional output” actually helps stabilize the user’s own mood. It reminded me that in system architecture, sometimes an “asymmetric interaction” protects the user’s heart better than “mirroring” ever could.
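
In code terms, the statelessness comes from what each request contains. In the listing at the end of this post, `execute_archeology` passes the model only the persona prompt and the current input, while the chat history in `st.session_state` is used just to redraw the screen. A minimal sketch of that idea, where `build_messages` is a hypothetical helper and not part of the app:

```python
from __future__ import annotations


def build_messages(persona_instruction: str, user_code: str) -> list[tuple[str, str]]:
    """Build the full model input for one turn: persona + current code, nothing else."""
    return [
        ("system", persona_instruction),
        ("human", f"Analyze this code:\n{user_code}"),
    ]


persona = "You are an Elite Butler. Address the user as 'Master'."

# Turn 1: the user is venting.
first = build_messages(persona, "// this is garbage, who wrote this?!\nvar a = 1;")

# Turn 2: a new snippet. Nothing from turn 1 is carried over.
second = build_messages(persona, "var b = 2;")
```

Because `second` is built from scratch, the frustration expressed in the first turn never reaches the model again, which is what keeps the persona steady.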

🐰 Conclusion: The Path of Optimization

Early on, I tried using mainstream Cloud LLMs. However, for the sake of control and privacy, I pivoted to Local Edge AI (Ollama + Llama 3). This eliminated external network variables and realized true “Archeology Freedom.”

By iterating on the wording of the persona prompts, I got these three personas to serve their roles well on my Streamlit interface. Technical archeology doesn’t have to be heavy; sometimes, all you need is a little LangChain and a spark of imagination.

📦 [Python]

    import streamlit as st
    from langchain_ollama import OllamaLLM
    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.output_parsers import StrOutputParser


    # --- 1. Initialize Llama (Edge AI) ---
    def initialize_llama():
        try:
            return OllamaLLM(model="llama3", temperature=0.4)
        except Exception as e:
            st.error(f"Llama Initialization Failed: {e}")
            return None


    # --- 2. Persona config ---
    PERSONA_CONFIG = {
        "The Elite Butler (English)": {
            "system_prompt": "You are a world-class Manga-style Elite Butler. Address the user as 'Master'. Focus on high-precision code analysis.",
            "bg_color": "#E1F5FE",
            "text_color": "#01579B",
            "avatar": "🤵",
        },
        # Label: "Big Sister (Cantonese)". Prompt gloss: a gentle, mature, accepting
        # big sister (Onee-san style) who calls the user "little cutie", explains the
        # logic patiently and encouragingly, and answers in warm Traditional Chinese.
        "大姐姐 (廣東話)": {
            "system_prompt": "妳是一位溫柔、成熟且充滿包容力的大姐姐(Onee-san style)。妳會稱呼使用者為「小可愛」。妳的語氣輕柔且帶有鼓勵性,會耐心地解釋代碼背後的邏輯,讓使用者感到安心。請用溫暖的繁體中文回答。",
            "bg_color": "#FCE4EC",
            "text_color": "#880E4F",
            "avatar": "🌸",
        },
        # Label: "LIHKG guy". Prompt gloss: [language constraint: answer entirely in
        # Cantonese and LIHKG slang, no English explanations] a foul-mouthed but
        # good-hearted LIHKG IT geek who must end with an accurate technical fix.
        "連登仔 (LIHKG)": {
            "system_prompt": "【語言限制:必須全程使用廣東話及LIHKG口語回答,禁止輸出英文解釋】你係一個好多粗口但心腸唔錯嘅連登 IT 狂人。雖然你把口衰,但最後一定要提供準確嘅技術修復方案。",
            "bg_color": "#F5F5F5",
            "text_color": "#333333",
            "avatar": "🤡",
        },
    }

    # --- 3. UI ---
    # Configure the page before any other Streamlit call (initialize_llama may call st.error).
    st.set_page_config(page_title="Archeologist Llama Edge", layout="wide")
    llm = initialize_llama()

    with st.sidebar:
        st.title("🐰 Edge AI Control")
        selected_name = st.selectbox("Select Agent:", list(PERSONA_CONFIG.keys()))
        config = PERSONA_CONFIG[selected_name]
        if llm:
            st.success("✅ Llama Online (Local)")

    # Without a model there is nothing to chat with, so stop rendering here.
    if llm is None:
        st.stop()


    # --- 4. THE LCEL CHAIN ---
    def execute_archeology(user_code, persona_instruction):
        prompt_template = ChatPromptTemplate.from_messages([
            ("system", persona_instruction),
            ("human", "Analyze this code:\n{user_input}"),
        ])
        # Prompt | LLM | Parser: only the persona and the current input reach the model.
        chain = prompt_template | llm | StrOutputParser()
        return chain.invoke({"user_input": user_code})


    # --- 5. Chat Loop ---
    if "messages" not in st.session_state:
        st.session_state.messages = []

    # Redraw earlier turns; this history is for display only and is not sent to the model.
    for message in st.session_state.messages:
        with st.chat_message(message["role"], avatar=message.get("avatar")):
            if "color" in message:
                st.markdown(f'<div style="background-color:{message["color"]}; padding:10px; border-radius:10px; color:{message["text_color"]}">{message["content"]}</div>', unsafe_allow_html=True)
            else:
                st.markdown(message["content"])

    if user_input := st.chat_input("Show me the legacy code... 請貼上陳年舊程式碼..."):
        st.session_state.messages.append({"role": "user", "content": user_input, "avatar": "🐰"})
        with st.chat_message("user", avatar="🐰"):
            st.markdown(user_input)

        with st.chat_message("assistant", avatar=config["avatar"]):
            with st.spinner("Llama is thinking locally..."):
                response_text = execute_archeology(user_input, config["system_prompt"])
            st.markdown(f'<div style="background-color:{config["bg_color"]}; padding:15px; border-radius:10px; color:{config["text_color"]}">{response_text}</div>', unsafe_allow_html=True)

        st.session_state.messages.append({
            "role": "assistant",
            "content": response_text,
            "avatar": config["avatar"],
            "color": config["bg_color"],
            "text_color": config["text_color"],
        })
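
One caveat about the colored bubbles: the listing places the model's reply directly inside an HTML `<div>` rendered with `unsafe_allow_html=True`. When the reply quotes legacy markup such as `<select>` or `<script>`, the browser may treat it as real HTML instead of showing it as text. A minimal sketch of one way to guard against that, using the standard library's `html.escape`. The helper name `bubble_html` is hypothetical and not part of the original app.

```python
import html


def bubble_html(text: str, bg_color: str, text_color: str, padding_px: int = 15) -> str:
    """Wrap model output in a colored bubble, escaping any HTML it contains."""
    # Escape <, >, &, and quotes, then keep line breaks visible inside the div.
    safe = html.escape(text).replace("\n", "<br>")
    return (
        f'<div style="background-color:{bg_color}; padding:{padding_px}px; '
        f'border-radius:10px; color:{text_color}">{safe}</div>'
    )


reply = 'The <select id="tsession2"> element drives the value.\nNo <script> needed.'
bubble = bubble_html(reply, "#E1F5FE", "#01579B")
```

The result can be passed to `st.markdown(bubble, unsafe_allow_html=True)` in place of the inline f-string. The trade-off is that any Markdown in the reply is shown as plain text, so this suits personas that answer mostly in prose.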